LIVE-ShareChat Video Quality Assessment Database

Introduction

We conducted a large-scale subjective study of the perceptual quality of user-generated mobile video content, using a set of mobile-originated videos obtained from the Indian social media platform ShareChat. The content, which was viewed by volunteer human subjects under controlled laboratory conditions, culturally diversifies the existing corpus of User-Generated Content (UGC) video quality datasets. There is a great need for large and diverse UGC-VQA datasets, given the explosive global growth of the visual internet and social media platforms. This is particularly true of videos captured by smartphones, especially in rapidly emerging economies like India. ShareChat provides a safe, culturally oriented community space for users to generate and share content in their preferred Indian languages and dialects. Our subjective quality study, which is based on this data, supplies much-needed cultural, visual, and language diversification to the overall shareable corpus of video quality data. We expect that this new data resource will also allow for the development of systems that can predict the perceived visual quality of Indian social media videos and, in this context, control scaling and compression protocols for streaming, provide better user recommendations, and guide content analysis and processing. We demonstrate the value of the new data resource by evaluating leading blind video quality models on it, including a simple new model, called MoEVA, which deploys a mixture of experts to predict video quality.

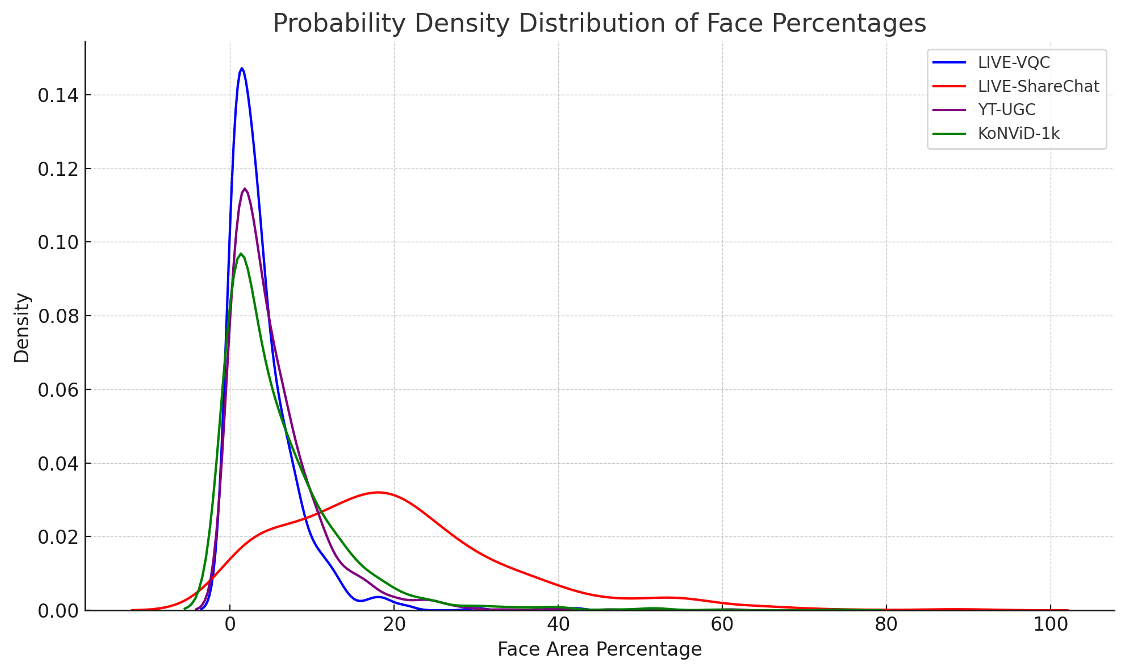

(b) Distribution of Face Area Percentages. The LIVE-ShareChat videos (red) show a significantly higher concentration of face close-ups compared to other datasets (KoNViD-1k, LIVE-VQC, YouTube-UGC), confirming the prevalence of portrait-mode selfie videos.

The cultural videos in the LIVE-ShareChat IUGC-VQA database have divergent characteristics: a higher density of them have lower SI, TI, CI, and Sharpness than the videos in LIVE-VQC, KoNViD-1k, and especially YouTube-UGC. This difference is also visible in the t-SNE features, where the LIVE-ShareChat videos form a separate cluster that has little overlap with the features from the other datasets.

(b) t-SNE visualization of VGG-19 deep features. The LIVE-ShareChat videos form a distinct cluster with little overlap, demonstrating that the visual characteristics of Indian user-generated content are unique and divergent from existing Western-centric datasets.

It is evident that features derived from the LIVE-ShareChat videos exhibit the least coverage of SI-TI space, whereas YouTube-UGC shows the most coverage. These cultural videos have simpler content with less "action". The LIVE-ShareChat database contains many videos with face close-ups, quite unlike the other datasets, where the variation in depth of field and types of content is much higher.
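For readers unfamiliar with the SI-TI plane, the following is a brief reminder of how spatial information (SI) and temporal information (TI) are commonly defined. This page does not state which definition was used, so the formulas below assume the standard ITU-T P.910 formulation. Here $F_n$ denotes the luminance plane of frame $n$, $\operatorname{Sobel}(\cdot)$ is the Sobel gradient magnitude, and $\operatorname{std}_{i,j}$ is the standard deviation over pixel positions $(i,j)$.

$$
\mathrm{SI} = \max_{n} \; \operatorname{std}_{i,j}\big[\operatorname{Sobel}(F_n)(i,j)\big],
\qquad
\mathrm{TI} = \max_{n} \; \operatorname{std}_{i,j}\big[F_n(i,j) - F_{n-1}(i,j)\big]
$$

Under these definitions, a video dominated by a static face close-up has few strong edges and small frame-to-frame differences, so it would sit near the low-SI, low-TI corner of the plane.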

We are making the LIVE-ShareChat Video Quality Assessment Database available to the research community free of charge. If you use this database in your research, we kindly ask that you cite our paper and website listed below:

- S. Mishra, M. Jha, A. C. Bovik, "Subjective and Objective Analysis of Indian Social Media Video Quality", arXiv:2401.02794 [Arxiv]

- S. Mishra, M. Jha, A. C. Bovik, "LIVE-ShareChat Video Quality Assessment Database," Online: https://live.ece.utexas.edu/research/LIVE-ShareChat-VQA/index.html, 2024.

Download: please fill out the Google Form to get access to the database.

Database Description

The LIVE-ShareChat database contains 600 videos sampled from a publicly available set of 20,000 videos on the ShareChat website. The videos were pre-labeled by ShareChat video quality engineers with annotations pertaining to quality issues commonly found in user-generated content, such as jitter and blur, abnormal lighting, and excess camera movement. We ensured that each type of annotated issue was well represented. The dimensions of each video depend on the camera specifications, the settings during capture, and any editing by the user. The heights of the original superset of videos varied between 528 and 5428 pixels, while the widths varied between 320 and 2420 pixels. To make the final data more amenable to training and processing by existing learning architectures, we used only those videos having heights between 900 and 1500 pixels and widths between 500 and 800 pixels. All of the videos have heights greater than their widths, making them suitable for viewing in portrait mode, which is preferred on social media platforms like ShareChat, Instagram, and TikTok. The videos were selected to have durations between 10 and 65 seconds, and were then clipped to 8 seconds. This choice allowed us to present a wider variety of content to each subject while reducing temporal quality variations, making it easier for the subjects to provide overall video quality judgments.
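The selection criteria above can be sketched as a simple metadata filter. This is only an illustration, not the authors' actual pipeline: the `VideoMeta` record, the function names, the inclusive treatment of the range bounds, and the default clip start of 0 seconds are all assumptions, since the description does not say how metadata was stored or where each 8-second clip was taken from.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple

# Ranges quoted in the database description. Treating the bounds as
# inclusive is an assumption.
HEIGHT_RANGE = (900, 1500)      # pixels
WIDTH_RANGE = (500, 800)        # pixels
DURATION_RANGE = (10.0, 65.0)   # seconds
CLIP_SECONDS = 8.0


@dataclass(frozen=True)
class VideoMeta:
    """Hypothetical metadata record; not a format shipped with the database."""
    name: str
    width: int       # pixels
    height: int      # pixels
    duration: float  # seconds


def is_eligible(video: VideoMeta) -> bool:
    """Check the size and duration ranges stated in the description."""
    in_height = HEIGHT_RANGE[0] <= video.height <= HEIGHT_RANGE[1]
    in_width = WIDTH_RANGE[0] <= video.width <= WIDTH_RANGE[1]
    in_duration = DURATION_RANGE[0] <= video.duration <= DURATION_RANGE[1]
    # Portrait orientation already follows from the two size ranges
    # (minimum height 900 > maximum width 800); kept as an explicit check.
    is_portrait = video.height > video.width
    return in_height and in_width and in_duration and is_portrait


def select_videos(videos: Iterable[VideoMeta]) -> List[VideoMeta]:
    """Keep only the videos that satisfy every criterion."""
    return [v for v in videos if is_eligible(v)]


def clip_window(video: VideoMeta, start: float = 0.0) -> Tuple[float, float]:
    """Return (start, end) in seconds of an 8-second clip.

    The source does not state where the clip was taken, so `start`
    defaults to 0.0 purely as a placeholder.
    """
    end = start + CLIP_SECONDS
    if start < 0 or end > video.duration:
        raise ValueError("clip window falls outside the video")
    return (start, end)


if __name__ == "__main__":
    candidates = [
        VideoMeta("a.mp4", 720, 1280, 30.0),   # inside every range
        VideoMeta("b.mp4", 1080, 1920, 20.0),  # too tall and too wide
        VideoMeta("c.mp4", 576, 1024, 5.0),    # too short in duration
    ]
    for v in select_videos(candidates):
        print(v.name, clip_window(v))
```

The returned `(start, end)` pair could then be handed to whatever trimming tool is in use; the choice of tool is outside what the description specifies.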

Investigators

The investigators in this research are:

- Sandeep Mishra (sandy.mishra@utexas.edu) -- Graduate student, Dept. of ECE, UT Austin.

- Mukul Jha (mukul@sharechat.co) -- Machine Learning Engineer, ShareChat Inc., India.

- Alan C. Bovik (bovik@ece.utexas.edu) -- Professor, Dept. of ECE, UT Austin.

Copyright Notice

-----------COPYRIGHT NOTICE STARTS WITH THIS LINE------------

Copyright (c) 2024 The University of Texas at Austin

All rights reserved.

Permission is hereby granted, without written agreement and without license or royalty fees, to use, copy, modify, and distribute this database (the videos, the results and the source files) and its documentation for any purpose, provided that the copyright notice in its entirety appear in all copies of this database, and the original source of this database, Laboratory for Image and Video Engineering (LIVE, http://live.ece.utexas.edu) at the University of Texas at Austin (UT Austin, http://www.utexas.edu), is acknowledged in any publication that reports research using this database.

The following paper/website are to be cited in the bibliography whenever the database is used as:

- S. Mishra, M. Jha, A. C. Bovik, "Subjective and Objective Analysis of Indian Social Media Video Quality", arXiv:2401.02794 [Arxiv]

- S. Mishra, M. Jha, A. C. Bovik, "LIVE-ShareChat Video Quality Assessment Database," Online: https://live.ece.utexas.edu/research/LIVE-ShareChat-VQA/index.html, 2024.

IN NO EVENT SHALL THE UNIVERSITY OF TEXAS AT AUSTIN BE LIABLE TO ANY PARTY FOR DIRECT, INDIRECT, SPECIAL, INCIDENTAL, OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE USE OF THIS DATABASE AND ITS DOCUMENTATION, EVEN IF THE UNIVERSITY OF TEXAS AT AUSTIN HAS BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

THE UNIVERSITY OF TEXAS AT AUSTIN SPECIFICALLY DISCLAIMS ANY WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE. THE DATABASE PROVIDED HEREUNDER IS ON AN "AS IS" BASIS, AND THE UNIVERSITY OF TEXAS AT AUSTIN HAS NO OBLIGATION TO PROVIDE MAINTENANCE, SUPPORT, UPDATES, ENHANCEMENTS, OR MODIFICATIONS.

-----------COPYRIGHT NOTICE ENDS WITH THIS LINE------------
